Everyone is being told to “add AI” to operations. The problem is that most advice skips the boring part: which workflows actually get easier, and which ones turn into risk magnets.

LLMs are great at language work: reading, writing, summarizing, mapping messy inputs into a clean draft. They are not great at being trusted hands in production.

This guide is about putting LLMs where they reduce toil today, and keeping them away from the places where they create new failure modes.

Executive summary

· LLMs reduce toil when the work is mostly reading and writing, and the output can be validated quickly.

· They disappoint when you ask them to do open-ended root cause analysis, make risky changes, or operate without tight guardrails.

· The winning pattern is Draft -> Validate -> Execute, with humans owning the final action and the rollback plan.

· Start with one workflow, one source of truth, and one definition of done. Then expand.

· Treat every LLM output as a draft, not a fact. Make verification part of the workflow, not a moral lesson.

The right mental model

Think of an LLM like a fast junior analyst who can read 30 tabs without complaining, then write a clean first draft.

They are useful because they compress time, not because they replace judgment.

If you hand them production credentials and ask them to “fix it”, you have built a new outage generator.

A practical rule: If the task ends with an irreversible action, the LLM should stop one step earlier.

The toil-fit test (use this before you build anything)

Before you automate, run the workflow through three questions:

· Is the input mostly text, logs, tickets, or metrics that can be pasted or retrieved from a known system?

· Can a human validate the output in under 2 minutes using a trusted source (query, dashboard, runbook, diff)?

· If the output is wrong, is the blast radius low (drafts, summaries, suggestions) or high (changes, deletes, policy denies)?

If you answer Yes, Yes, Low blast radius, the LLM will probably reduce toil. If not, keep it as a helper, not an operator.
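The three questions can be written down as a tiny check so every candidate workflow is scored the same way. This is a minimal sketch, not a prescribed tool: the `Workflow` fields, the `toil_fit` function, and the two example workflows are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    input_from_known_system: bool  # Q1: text, logs, tickets, or metrics from a known system
    validation_minutes: float      # Q2: time a human needs to check the output
    high_blast_radius: bool        # Q3: changes, deletes, policy denies


def toil_fit(wf: Workflow, max_validation_minutes: float = 2.0) -> str:
    """Return 'operator-candidate' only for Yes, Yes, Low blast radius."""
    passes = (
        wf.input_from_known_system
        and wf.validation_minutes < max_validation_minutes
        and not wf.high_blast_radius
    )
    return "operator-candidate" if passes else "helper-only"


# Hypothetical examples, not measurements.
alert_summary = Workflow("alert summarization", True, 1.0, False)
auto_remediation = Workflow("hands-free remediation", True, 1.0, True)

for wf in (alert_summary, auto_remediation):
    print(wf.name, "->", toil_fit(wf))
```

The 2-minute threshold comes from the second question; tune it to whatever your team can realistically verify.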

Where LLMs help vs where they usually don't

Use this as a quick filter. The “help” column assumes you still validate outputs with a source of truth.

Best-fit (toil drops) | Maybe (depends on guardrails) | Usually not (risk or noise) |

Ticket and alert summarization | Triage recommendations | Hands-free remediation |

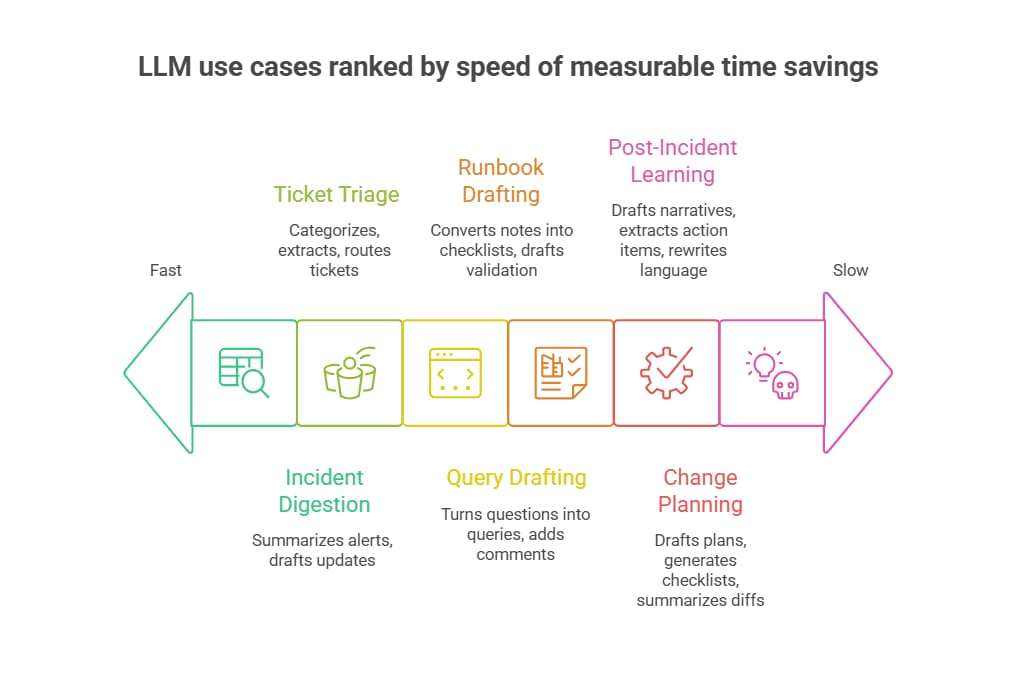

Use cases that pay off fast

These are the workflows where I consistently see measurable time savings without raising risk. Start here.

1) Incident and alert digestion

When you are on call, the pain is rarely the alert itself. It's the context switching: what changed, what is impacted, and what you already tried.

Where the LLM helps:

· Summarize noisy alert payloads into: symptom, scope, time window, and likely dependencies.

· Convert chat logs into a clean handoff note for the next engineer.

· Draft a customer-facing status update that is factual and calm.

Guardrails:

· Only feed it data you would paste in a ticket anyway.

· Require it to cite the exact lines or fields it used from the alert or log excerpt.

· Never let it “decide” severity. Have it propose a severity and list the evidence.
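The citation guardrail is easy to enforce mechanically: if the model quotes a line as evidence, that line should exist in the data you gave it. A minimal sketch, assuming the model returns its quotes as a list of strings; `unsupported_quotes` and the sample alert text are made up for illustration.

```python
from typing import List


def _normalize(text: str) -> str:
    """Collapse whitespace so line wrapping does not cause false mismatches."""
    return " ".join(text.split())


def unsupported_quotes(quotes: List[str], source_text: str) -> List[str]:
    """Return the quotes that do not appear verbatim in the source data."""
    haystack = _normalize(source_text)
    missing = []
    for quote in quotes:
        needle = _normalize(quote)
        # An empty quote proves nothing, so treat it as unsupported.
        if not needle or needle not in haystack:
            missing.append(quote)
    return missing


# Fabricated sample data for the sketch.
alert_text = (
    "10:02:11 api-gateway 5xx rate 12% region=westeurope\n"
    "10:02:40 db-primary connections 498/500\n"
)
model_quotes = ["db-primary connections 498/500", "cache eviction storm"]

print(unsupported_quotes(model_quotes, alert_text))
```

Any quote that comes back from this check is a hypothesis without evidence, and should be dropped or flagged before the summary reaches a human.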

Example prompt (copy and paste):

You are helping with on-call triage. Use only the data I provide below.

Return:

1) One-sentence symptom

2) Scope (what is affected, what is not)

3) Timeline (key timestamps)

4) Top 3 hypotheses with evidence from the text (quote the lines)

5) Next 5 checks, ordered by fastest to validate

Do not guess. If evidence is missing, say what is missing.

DATA:

<paste alert payload, snippets, metrics here>

2) Ticket triage and routing

Most ticket queues rot because nobody can read everything fast enough. LLMs are good at the first pass: categorize, extract, and route.

Where the LLM helps:

· Normalize tickets into a standard intake format (what, where, impact, urgency, requested deadline).

· Suggest the owning team based on keywords and known services.

· Detect missing prerequisites and ask the right follow-up questions.

Guardrails:

· Use a small, explicit routing map (service -> owning team). Do not let it invent owners.

· Require it to output a confidence score and the reason.

· If confidence is low, route to a human triage lane, not a random team.
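These routing guardrails fit in a few lines: look the service up in an explicit map, and fall back to a human lane when the service is unknown or the confidence is low. A minimal sketch; the map entries, the `human-triage` lane name, and the 0.7 threshold are assumptions, and the confidence value is the model's self-reported score, not a calibrated probability.

```python
from typing import Optional

# Hypothetical routing map: service -> owning team.
ROUTING_MAP = {
    "payments-api": "team-payments",
    "auth-service": "team-identity",
}
HUMAN_TRIAGE = "human-triage"


def route(service: Optional[str], confidence: float, threshold: float = 0.7) -> str:
    """Pick an owner from the map only; never accept an owner the model invented."""
    owner = ROUTING_MAP.get(service) if service else None
    if owner is None or confidence < threshold:
        return HUMAN_TRIAGE
    return owner


print(route("payments-api", 0.9))
print(route("payments-api", 0.4))
print(route("billing-cron", 0.95))
```

Because the owner always comes from `ROUTING_MAP`, a hallucinated team name can never end up on a ticket.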

Example intake template output:

Ticket summary:

- Request type:

- Environment:

- Service / resource:

- Business impact:

- Deadline / change window:

- What changed recently:

Missing info to request:

- ...

Suggested owner (from routing map):

- Team:

- Confidence:

3) Query drafting for ops visibility (KQL, Resource Graph, SQL)

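Query writing is a strong fit for an LLM because you can validate the draft quickly: run it read-only and compare the results against something you already trust. A query that runs without error can still return the wrong rows, so check the output, not just whether it executed.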

Where the LLM helps:

· Turn a question into a first-pass KQL or Azure Resource Graph query.

· Add comments, parameters, and filters so the query can be reused.

· Explain what each query step is doing, in plain language.

Guardrails:

· Always run queries read-only first.

· Have the LLM output assumptions (table names, columns, time ranges) so you can correct them.

· Keep a small library of vetted queries and prefer modification over net-new creation.

Example prompt (copy and paste):

Write a KQL query for Azure Log Analytics.

Goal: <describe what you need>

Constraints:

- Time range: last 24 hours

- Must return: <fields>

- Exclude: <noise>

If you are unsure of table or column names, show 2 alternatives and tell me how to verify.

4) Runbook drafting and maintenance

Runbooks fail because nobody updates them. LLMs can turn raw notes into a clean runbook, and they can keep the format consistent.

Where the LLM helps:

· Convert a messy incident timeline into a repeatable checklist.

· Draft validation steps and expected outputs.

· Generate a rollback section and the 'stop conditions' for safety.

Guardrails:

· Require sources: link the runbook to the exact dashboards, queries, and commands it depends on.

· Treat runbooks like code: review, version, and test them.

· Never ship a runbook that has not been executed at least once in a safe environment.

5) Change planning and safety checks

LLMs are useful before the change, not during it. They can help you write a plan that is harder to misunderstand.

Where the LLM helps:

· Draft a change plan with prerequisites, validation, and rollback.

· Generate a checklist for change windows and communications.

· Turn a diff into a plain-language summary for stakeholders.

Guardrails:

· Do not let the LLM be the approver. It can suggest risks, but humans own sign-off.

· Always include validation queries and a rollback plan.

· If the change cannot be rolled back, require a canary or staged rollout.

Example change-plan skeleton:

Change goal:

Scope:

Risk level:

Pre-checks (must pass before change):

Execution steps:

Validation steps (with exact commands/queries):

Rollback steps:

Communication plan:

Owner:

6) Post-incident learning

Postmortems are writing-heavy and time-sensitive. LLMs can draft the first version while the context is fresh.

Where the LLM helps:

· Turn a timeline into a narrative with clear sections: what happened, impact, detection, response, and follow-ups.

· Extract action items and assign owners, based on the notes you provide.

· Rewrite blamey language into factual language.

Guardrails:

· Keep it factual. No invented root cause, no invented numbers.

· Separate hypotheses from verified findings.

· Have an engineer review the technical accuracy before publishing.

Where LLMs don't reduce toil (and why)

This is the part most teams learn the hard way. LLMs can sound confident even when they're wrong, and ops is a place where “close enough” is still a failure.

1) Unbounded root cause analysis

If you ask an LLM to explain an outage across ten systems with incomplete telemetry, it will fill gaps. That feels helpful until it sends you down the wrong path.

· Better: have it propose hypotheses tied to specific evidence you provide.

· Best: use it to write the query or checklist that finds the missing evidence.

2) Hands-free remediation in production

Autonomous changes are tempting because they look like the end of toil. In practice, they are where toil becomes outages.

· LLMs are good at drafting the remediation plan and the rollback plan.

· Humans (or tightly controlled automation) should execute the action.

3) Security boundary decisions

Access control, data classification, and incident response decisions require context that is rarely present in a prompt. Treat the model as a helper for documentation, not a judge.

· Use it to summarize policy requirements into an engineer-readable checklist.

· Do not use it to approve exceptions or interpret ambiguous risk without a human owner.

4) “Truth” tasks without verification

Anything that requires being correct, not just plausible, needs a verification step. LLMs should point you to the check, not replace it.

· Examples: compliance evidence, billing accuracy, exact inventory counts, and entitlement reviews.

· Use the model to generate the query, then trust the query result.

The safe deployment pattern: Draft -> Validate -> Execute

If you adopt only one idea from this guide, make it this workflow. It keeps speed, and it keeps control.

· Draft: LLM generates a summary, a query, a plan, or a runbook section.

· Validate: a human verifies against a source of truth (logs, dashboards, cost export, infra state, code diff).

· Execute: the action is performed by a human or by automation that is already governed (CI/CD, policy, runbooks).

If you cannot name the validation step, you are not ready to automate that workflow.
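One way to make the pattern hard to skip is to have the execute step refuse anything that lacks a named reviewer and a pointer to the evidence. A minimal sketch under that assumption; the `Draft` class, the `validate` and `execute` functions, and the example action are hypothetical, not part of any existing tool.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    content: str                        # LLM output: summary, query, plan, runbook section
    validated_by: Optional[str] = None  # human who checked it
    evidence: Optional[str] = None      # link or note pointing at the source of truth


def validate(draft: Draft, reviewer: str, evidence: str) -> Draft:
    """Record who validated the draft and what they checked it against."""
    if not reviewer or not evidence:
        raise ValueError("validation needs a named reviewer and evidence")
    draft.validated_by = reviewer
    draft.evidence = evidence
    return draft


def execute(draft: Draft, action: Callable[[str], str]) -> str:
    """Run the action only for validated drafts."""
    if draft.validated_by is None or draft.evidence is None:
        raise PermissionError("draft has not been validated; refusing to execute")
    return action(draft.content)


draft = Draft("restart worker pool B")
try:
    execute(draft, lambda step: f"queued: {step}")
except PermissionError as err:
    print("blocked:", err)

validate(draft, reviewer="alice", evidence="dashboard snapshot attached to ticket")
print(execute(draft, lambda step: f"queued: {step}"))
```

In practice the `action` would hand off to automation that is already governed, such as a CI/CD pipeline, rather than touching production directly.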

Guardrails that keep you out of trouble

These guardrails are boring. That's why they work.

· Scope control: define what systems, subscriptions, and environments the workflow is allowed to talk about.

· Data boundaries: do not paste secrets. Redact tokens, keys, and customer data.

· Source of truth: pick one system that “wins” for each workflow (ticketing, monitoring, inventory, cost).

· Output format: require structured output (sections, bullets, fields). Free-form answers drift.

· Confidence and uncertainty: require the model to say what it does not know and what to verify.

· Audit trail: store the prompt, the output, and the validation evidence (even if it's just a link to the query run).
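The data-boundary and audit-trail guardrails can share one small helper: redact before anything leaves your machine, then store the redacted prompt alongside the output and the validation evidence. A minimal sketch; the two regex patterns are illustrative and will miss many secret formats, so treat them as a backstop, not a replacement for a real secret scanner. The `redact` and `audit_record` names are hypothetical.

```python
import re
from datetime import datetime, timezone

# Illustrative patterns only; real secrets come in many more shapes.
PATTERNS = [
    (re.compile(r"(?i)\b(bearer)\s+[A-Za-z0-9._\-]+"), r"\1 [REDACTED]"),
    (re.compile(r"(?i)\b(password|secret|token|api[_-]?key)\s*[=:]\s*\S+"), r"\1=[REDACTED]"),
]


def redact(text: str) -> str:
    """Replace obvious credentials with a placeholder before sending text to a model."""
    for pattern, replacement in PATTERNS:
        text = pattern.sub(replacement, text)
    return text


def audit_record(prompt: str, output: str, evidence: str) -> dict:
    """Bundle what was asked, what came back, and how it was validated."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt": redact(prompt),
        "output": output,
        "validation_evidence": evidence,
    }


sample = "Authorization: Bearer abc.def-123 password=hunter2 ok"
print(redact(sample))
```

Storing the redacted prompt, not the raw one, keeps the audit trail itself from becoming a place where secrets leak.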

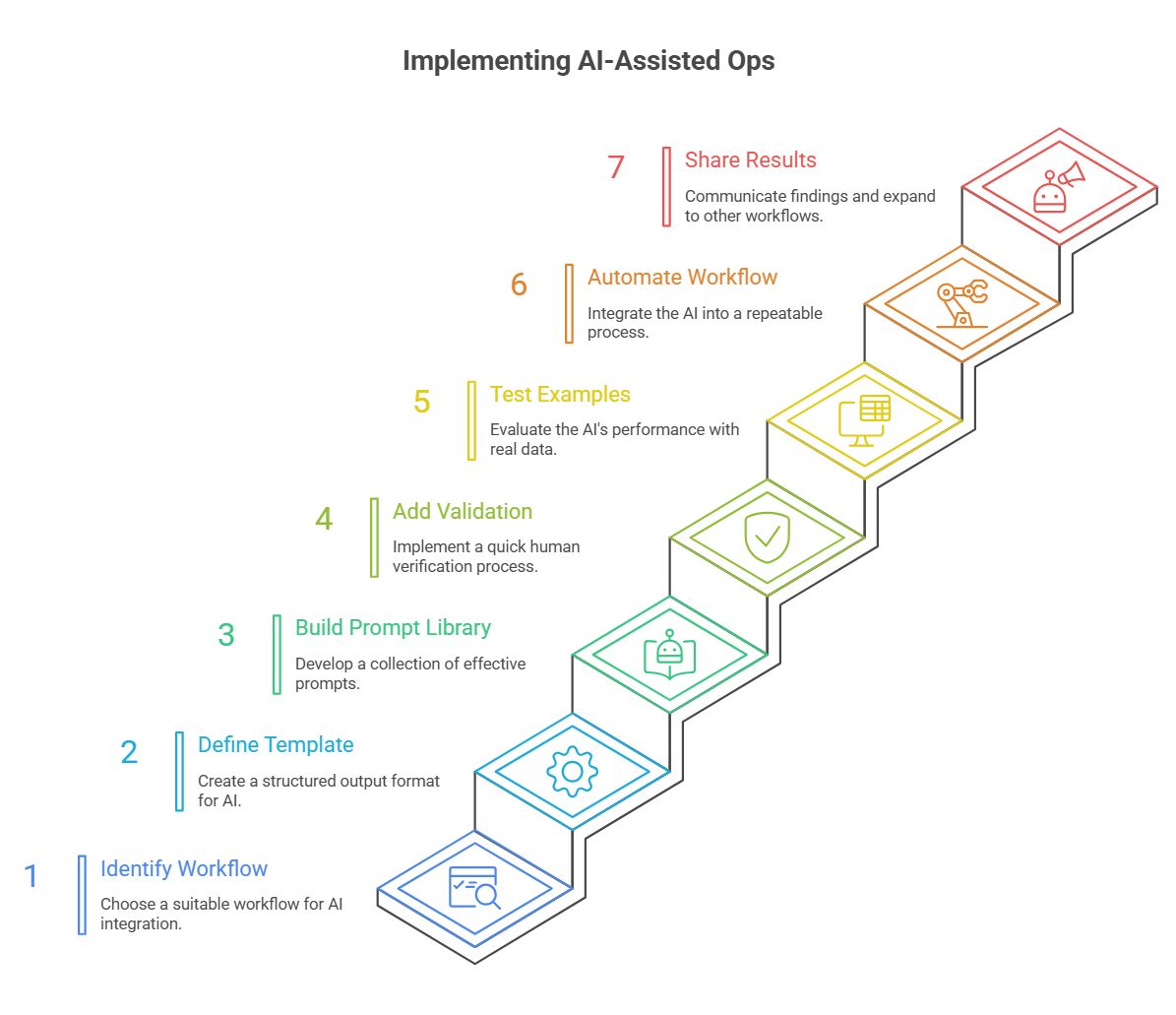

A 7-day starter plan (low drama, high signal)

If you want quick wins without boiling the ocean, run this sequence.

· Day 1: Pick one workflow: incident digestion, ticket normalization, or query drafting.

· Day 2: Define a template output and a definition of done (what makes the output usable).

· Day 3: Build a small prompt library with 3 to 5 copy-paste prompts for that workflow.

· Day 4: Add a validation step that takes under 2 minutes and document it.

· Day 5: Test it on 10 real examples. Track time saved and failure modes.

· Day 6: Turn the best prompt into a repeatable runbook section or ticket macro.

· Day 7: Share results. Expand to one adjacent workflow only after the first one is stable.

Closing thought

AI-assisted ops works when you treat the model like a drafting tool and the platform like the truth. Put the model in the loop, not in charge.


If you're building this inside Azure, the same pattern applies: draft queries, validate against telemetry, then ship automation through policy and CI/CD so the guardrails are real.