If your “AIOps strategy” sounds like “a bot that runs production,” you’re aiming at the wrong target. That story is comforting because it promises less work. It’s also how teams end up with automations nobody trusts, alerts nobody believes, and incidents that are harder to explain.

AIOps done well is boring in the best way. It helps you ask sharper questions, faster, with real context. Then it helps you automate the safest part of the response, with guardrails. Not hero-bot vibes. Not black-box changes at 2 a.m.

The real job of AIOps

Operations is a loop: observe → decide → act → learn. AIOps is just tooling that makes that loop tighter without increasing risk.

Observe: turn messy signals into a short list of “something changed” candidates.

Decide: surface the best next questions, not a confident guess.

Act: automate low-risk, reversible steps first.

Learn: capture evidence, outcomes, and ownership so you get better every week.

Operator rule: If it can’t explain the “why” in plain language, it doesn’t get write access. If it can’t prove blast radius, it doesn’t get to run unattended. If it can’t produce evidence after the fact, it’s not operations. It’s theater.
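One way to make the operator rule enforceable is to encode it as an access gate. The sketch below is a minimal illustration, not a reference implementation: `AutomationProfile`, `Access`, and `allowed_access` are hypothetical names, and treating "no evidence" the same as "no explanation" (read-only) is an assumption about how strictly you want to read the rule.

```python
from dataclasses import dataclass
from enum import Enum


class Access(Enum):
    READ_ONLY = "read-only"
    SUPERVISED_WRITE = "supervised write"
    UNATTENDED_WRITE = "unattended write"


@dataclass
class AutomationProfile:
    # Hypothetical self-declared properties of an automation.
    explains_why: bool          # can state its reasoning in plain language
    blast_radius_proven: bool   # scope of impact is bounded and verified
    emits_evidence: bool        # records who/what/when/why/result per action


def allowed_access(profile: AutomationProfile) -> Access:
    """Map the operator rule onto the highest access level an automation may hold."""
    # No plain-language "why", or no evidence trail: no write access at all.
    if not (profile.explains_why and profile.emits_evidence):
        return Access.READ_ONLY
    # Can explain and record, but cannot prove blast radius: a human must be present.
    if not profile.blast_radius_proven:
        return Access.SUPERVISED_WRITE
    return Access.UNATTENDED_WRITE
```

The useful property here is that access is derived from what the automation can demonstrate, not from who asked for it.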

Better questions beat smarter dashboards

Most teams don’t have an “AI problem.” They have a question-quality problem. When something looks wrong, the first 15 minutes are usually wasted on guesswork.

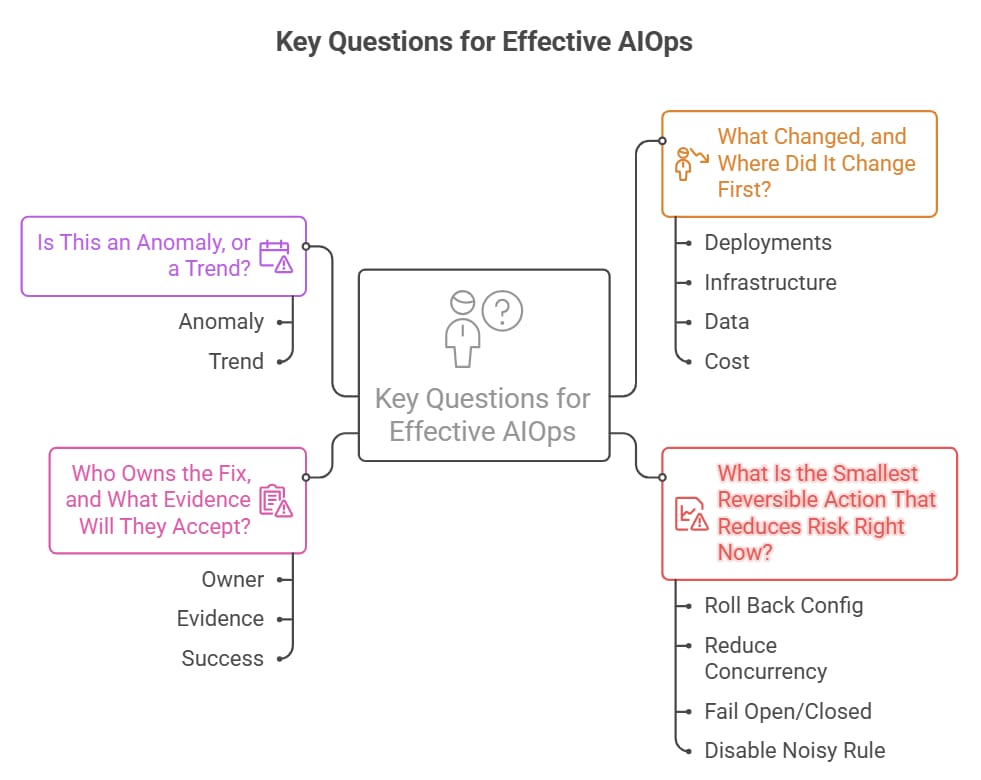

Here are the questions that actually move the needle.

1) What changed, and where did it change first?

Deployments: new release, config change, feature flag flip.

Infrastructure: scaling event, node replacement, route/DNS change, certificate rotation.

Data: index rebuild, stats update, backfill job, upstream schema tweak.

Cost: new resource class, higher request volume, a silent SKU drift.
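Answering “what changed, and where did it change first?” mostly comes down to lining up change records against the alert time. Here is a small sketch of that step, assuming you can already pull change events from your own sources into one list; `ChangeEvent`, `recent_changes`, and the 30-minute default window are illustrative choices, not part of any Azure API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ChangeEvent:
    when: datetime
    category: str   # "deployment", "infrastructure", "data", or "cost"
    summary: str


def recent_changes(events, alert_time, lookback=timedelta(minutes=30)):
    """Return the changes inside the lookback window, earliest first."""
    window_start = alert_time - lookback
    in_window = [e for e in events if window_start <= e.when <= alert_time]
    # Earliest first, so the top of the list is the "changed first" candidate.
    return sorted(in_window, key=lambda e: e.when)


if __name__ == "__main__":
    alert = datetime(2024, 1, 1, 12, 0)
    events = [
        ChangeEvent(alert - timedelta(minutes=5), "deployment", "feature flag flip"),
        ChangeEvent(alert - timedelta(minutes=20), "infrastructure", "certificate rotation"),
        ChangeEvent(alert - timedelta(hours=2), "data", "backfill job"),
    ]
    for event in recent_changes(events, alert):
        print(event.when.isoformat(), event.category, event.summary)
```

The earliest change in the window is only a candidate, not a verdict. It tells on-call where to look first.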

2) What is the smallest reversible action that reduces risk right now?

Roll back a config, not a whole release.

Reduce concurrency, not capacity.

Fail open or fail closed on one endpoint, not the entire system.

Disable the noisy rule, not the entire detection pipeline.

3) Who owns the fix, and what evidence will they accept?

Owner: team, on-call, or platform group—name it.

Evidence: logs, traces, change record, cost deltas, error budget impact.

Success: a clear “done” signal (p95 back to baseline, error rate normal, spend trend stable).

4) Is this an anomaly, or a trend?

Anomaly: a spike with a known trigger and a clear end.

Trend: a slope that keeps climbing (cost drift, latency creep, gradual saturation).

If it’s a trend, your response should be a control, not a one-time patch.
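The anomaly-versus-trend split can be approximated with very little math. The heuristic below is an assumption of this sketch, not a standard detector: it compares the median of the first quarter of a window with the median of the last quarter. A spike that ends returns to baseline, while a trend does not. The function name and the 20% threshold are illustrative.

```python
from statistics import median


def classify_series(values, threshold=0.2):
    """Label a metric window as 'trend', 'anomaly', or 'steady'.

    Assumes evenly spaced samples and a positive baseline.
    """
    if len(values) < 8:
        raise ValueError("need at least 8 points to compare start and end")
    quarter = len(values) // 4
    baseline = median(values[:quarter])
    latest = median(values[-quarter:])
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    limit = baseline * (1 + threshold)
    if latest > limit:
        return "trend"      # still elevated at the end of the window
    if max(values) > limit:
        return "anomaly"    # went high, then came back to baseline
    return "steady"
```

A window of latency samples that spikes and recovers is labeled `anomaly`; one that climbs steadily is labeled `trend`, which is the cue to build a control rather than a patch.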

Safer automation: the 3-layer pattern

Automation is where most AIOps programs earn distrust. The trick is not “more automation.” It’s safer automation in layers.

Layer 1 — Nudges (read-only): summarize what changed, who owns it, and what to check next.

Layer 2 — Guarded actions (low blast radius): run reversible fixes with strict scope limits.

Layer 3 — Hard stops + escape hatches: block only high-risk mistakes, and provide a documented, time-bound exception path.

When teams say they hate governance, what they often mean is: “I got blocked and nobody showed me the fastest way to a fix.” Safer automation follows the same principle: if the system can’t tell you the fastest safe move, it shouldn’t be clicking buttons.
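The three layers can be expressed as a routing decision made before anything runs. This is a sketch under stated assumptions: `ProposedAction`, `ExceptionTicket`, `route`, and the one-target default are hypothetical, and the choice to downgrade a hard stop to a nudge (rather than run automatically) when a valid exception exists is one conservative reading of the escape hatch.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class Layer(Enum):
    NUDGE = "nudge"
    GUARDED_ACTION = "guarded action"
    HARD_STOP = "hard stop"


@dataclass
class ExceptionTicket:
    ticket_id: str
    owner: str
    expires: datetime   # exceptions are time-bound by design


@dataclass
class ProposedAction:
    name: str
    reversible: bool
    targets: int                      # number of resources touched
    high_risk: bool = False           # e.g. data loss or security exposure
    exception: Optional[ExceptionTicket] = None


def route(action: ProposedAction, now: datetime, max_targets: int = 1) -> Layer:
    """Decide which layer handles a proposed action."""
    if action.high_risk:
        ticket = action.exception
        if ticket is None or ticket.expires <= now:
            return Layer.HARD_STOP
        # Valid exception: a named human may proceed; the automation only advises.
        return Layer.NUDGE
    if action.reversible and action.targets <= max_targets:
        return Layer.GUARDED_ACTION
    # Anything else stays read-only: summarize and hand off.
    return Layer.NUDGE
```

Note that the default outcome is a nudge. An action has to earn its way into the guarded layer by being both reversible and narrowly scoped.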

A practical AIOps workflow on Azure (without the hype)

You don’t need a brand-new platform to start. You need a repeatable pipeline that turns signals into decisions, and decisions into controlled actions.

Signal sources

Operational signals: Azure Monitor metrics + Log Analytics (KQL), app logs, traces, and platform events.

Change signals: CI/CD deployments, infrastructure-as-code changes, policy assignments, and configuration drift.

Cost signals: Azure Cost Management exports, tags, and resource inventory context from Azure Resource Graph.

Security signals: identity events, Defender alerts, and audit trails.

The “question engine”

This is the part most teams skip. Don’t. Turn an alert into a short, structured prompt your on-call can trust.

What changed in the last N minutes? (deployment, config, infra, data)

What’s the blast radius? (services, regions, subscriptions, customers)

What’s the current impact? (SLO breach, error budget burn, cost spike)

What’s the safest next action? (reversible, scoped, logged)

Who owns it and where does the ticket go?
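The five questions above translate naturally into a fixed template. The sketch below assumes the context has already been gathered by whatever pipeline you run; `AlertContext` and `triage_prompt` are hypothetical names. Anything missing is rendered as "unknown" so gaps stay visible instead of being papered over with a guess.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AlertContext:
    alert_name: str
    window_minutes: int
    changes: List[str] = field(default_factory=list)
    blast_radius: List[str] = field(default_factory=list)
    impact: Optional[str] = None
    safest_action: Optional[str] = None
    owner: Optional[str] = None
    ticket_queue: Optional[str] = None


def _or_unknown(items: List[str]) -> str:
    return ", ".join(items) if items else "unknown"


def triage_prompt(ctx: AlertContext) -> str:
    """Render the five triage questions with whatever facts are known."""
    owner = ctx.owner or "unknown"
    queue = ctx.ticket_queue or "unknown"
    lines = [
        f"Alert: {ctx.alert_name}",
        f"1. What changed in the last {ctx.window_minutes} minutes? {_or_unknown(ctx.changes)}",
        f"2. What is the blast radius? {_or_unknown(ctx.blast_radius)}",
        f"3. What is the current impact? {ctx.impact or 'unknown'}",
        f"4. What is the safest next action? {ctx.safest_action or 'unknown'}",
        f"5. Who owns it and where does the ticket go? {owner} / {queue}",
    ]
    return "\n".join(lines)
```

Keeping the structure fixed means on-call sees the same five lines on every alert, which is a large part of what makes the output trustworthy.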

Automation surfaces

Runbooks for reversible ops: restart a single instance, scale within bounds, clear a stuck queue, rotate a secret the right way.

Approval-required actions: anything that can cause data loss, security exposure, or broad downtime.

Policy guardrails: prevent known-bad configurations from existing in the first place.
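“Scale within bounds” is a good example of what a reversible runbook step looks like in practice. The helper below is a minimal sketch; the function name, the bounds, and the per-run step limit are all assumptions you would replace with your own values. It only computes the allowed target and leaves the actual scaling call to your tooling.

```python
def bounded_scale(current, requested, min_instances=2, max_instances=10, max_step=2):
    """Clamp a requested instance count to a per-run step limit and hard bounds.

    The hard bounds win over the step limit if the current count is already
    outside them.
    """
    if min_instances > max_instances:
        raise ValueError("min_instances must not exceed max_instances")
    # First limit how far a single run may move from the current count.
    stepped = max(current - max_step, min(requested, current + max_step))
    # Then enforce the absolute floor and ceiling.
    return max(min_instances, min(stepped, max_instances))
```

A request to jump from 4 instances to 50 becomes a move to 6. The automation can help, but it cannot make a large change in one unattended step.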

The minimum safety kit

Write permissions are rare. Read permissions are common.

Use managed identity where possible; keep secrets out of scripts.

Every action emits evidence: who, what, when, why, and result.

Add a time-bomb: auto-disable automations that exceed error thresholds.

Document the escape hatch: ticket + owner + expiry.
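Two items from the safety kit, evidence on every action and the time-bomb, fit together in one small wrapper. This is a sketch, assuming in-memory storage and a rolling window of recent results; `GuardedAutomation` and its parameters are hypothetical, and a real version would ship evidence to your logging or ticketing system instead of a list.

```python
from collections import deque
from datetime import datetime, timezone


class GuardedAutomation:
    """Runs actions, records evidence, and disables itself if it fails too often."""

    def __init__(self, name, max_error_rate=0.2, window=10):
        self.name = name
        self.max_error_rate = max_error_rate
        self.results = deque(maxlen=window)   # rolling record of success/failure
        self.enabled = True
        self.evidence = []                    # assumption: in-memory for illustration

    def run(self, action, who, why):
        if not self.enabled:
            raise RuntimeError(f"{self.name} is disabled; re-enable it after review")
        try:
            action()
            ok, result = True, "ok"
        except Exception as exc:              # failures are recorded, not hidden
            ok, result = False, f"failed: {exc}"
        self.results.append(ok)
        self.evidence.append({
            "who": who,
            "what": self.name,
            "when": datetime.now(timezone.utc).isoformat(),
            "why": why,
            "result": result,
        })
        # Time-bomb: once the window is full, trip if the error rate is too high.
        if len(self.results) == self.results.maxlen:
            error_rate = self.results.count(False) / len(self.results)
            if error_rate > self.max_error_rate:
                self.enabled = False
        return ok
```

Once tripped, the automation stays off until a person turns it back on, which keeps a failing runbook from retrying its way into a bigger incident.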

Common failure modes (and how to avoid them)

Black-box recommendations: if you can’t see inputs, you won’t trust outputs.

Automation without ownership: a bot can’t be on-call. Someone still has to own the outcome.

Overfitting to one incident: build patterns, not one-off magic.

Too much scope, too early: start with one service, one workflow, one measurable improvement.

No learning loop: if you don’t capture outcomes, you’ll repeat the same incidents with fancier tooling.

Quick checklist: ship AIOps without waking up the wrong people

Pick one use case with a measurable pain (noisy alerts, cost drift, incident triage time).

Define the top 5 questions you want answered in 60 seconds.

Start read-only: summarize context, changes, and probable owners.

Automate only reversible actions with strict bounds.

Add evidence: logs, tickets, and decision notes for every action.

Introduce hard stops only for high-blast-radius mistakes, with an escape hatch.

Review weekly: what did the system catch, what did it miss, and what should be retired?

AIOps isn’t a bot you hand the keys to. It’s a disciplined loop: better questions, safer moves, clearer evidence. Do that well and the tools finally feel like a multiplier instead of another thing to babysit.

If you want to make this real in your environment, start by grabbing the “Better Questions + Safe Automation” prompt pack for AIOps triage and controlled runbooks.