Right-sizing SQL is one of those jobs that looks simple until it isn't. Scale down and you risk timeouts, angry app owners, and a rollback at 2 a.m. Do nothing and the bill keeps drifting up.

This framework keeps you out of both traps. It is not a list of SKUs. It is a repeatable decision tree you can run every sprint: measure, classify the bottleneck, change one thing, and prove the outcome.

Framework at a glance

Start with the workload and the SLO, not the SKU.

Baseline for at least 7 days so you catch peaks, not just averages.

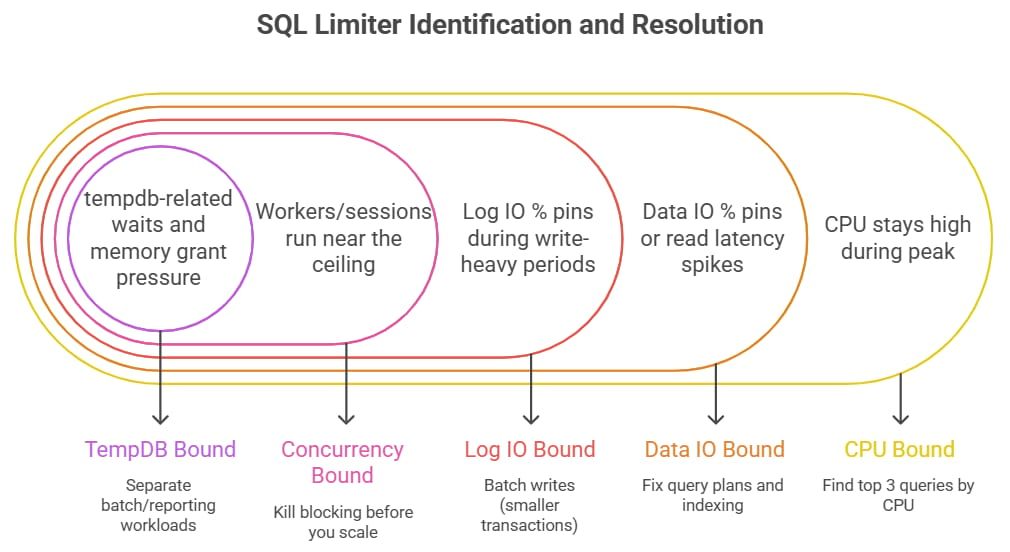

Decide based on the limiting signal (CPU, data IO, log IO, concurrency, tempdb, or storage).

Make one change at a time, with a rollback trigger defined before you make any modifications.

Treat right-sizing as an operating loop, not a one-time project.

Who this is for

Platform engineers, SREs, FinOps leads, and database owners who need to reduce spend without turning performance into a guessing game.

Step 0: Define 'safe' before you touch compute

Right-sizing is only safe if you agree on success criteria up front. Pick one primary SLO and one rollback trigger. Keep it simple.

Examples of good success criteria:

p95 app query latency stays within X% of baseline during peak hours

no increase in deadlocks/timeouts

CPU stays below a defined ceiling under peak load

Examples of rollback triggers:

p95 latency exceeds your threshold for more than N minutes

error rate/timeouts breach your SRE alert threshold

DTU/vCore saturation (or IO saturation) pins at 95-100% for sustained periods

Write these down in a change note. If you do not define rollback conditions, you will debate them in the middle of the incident.

Step 1: Baseline the right signals (the minimum set)

You do not need a perfect lab. You need a clean baseline that answers one question: what resource is the database begging for when it is slow?

Signal | Where to read it | Why it matters |

CPU % / vCore saturation | Azure Monitor metrics; sys.dm_db_resource_stats | High CPU with stable IO usually means compute-bound queries or high concurrency. |

Data IO % / read latency | Azure Monitor metrics; Query Store for heavy readers | If reads are slow, scaling compute may not help. You may be IO-bound or missing indexes. |

Log IO % / write latency | Azure Monitor metrics; log usage patterns | Heavy writes and slow log flush pushes you toward faster storage/tier or transaction tuning. |

Workers / sessions / concurrency | Azure Monitor metrics; sys.dm_exec_sessions; wait stats | If you run out of workers or see scheduler pressure, you need more compute or less blocking. |

Tempdb pressure | Waits related to tempdb; Query Store patterns; workload traits | Sorts, hashes, and temp tables can burn tempdb. Some tiers handle this better. |

Top queries by CPU/reads/writes | Query Store (runtime stats + plans) | Right-sizing without query awareness is gambling. Know what drives the peaks. |

Baseline window: aim for 7 to 14 days. If you only have 24 hours of data, treat every decision as higher risk.

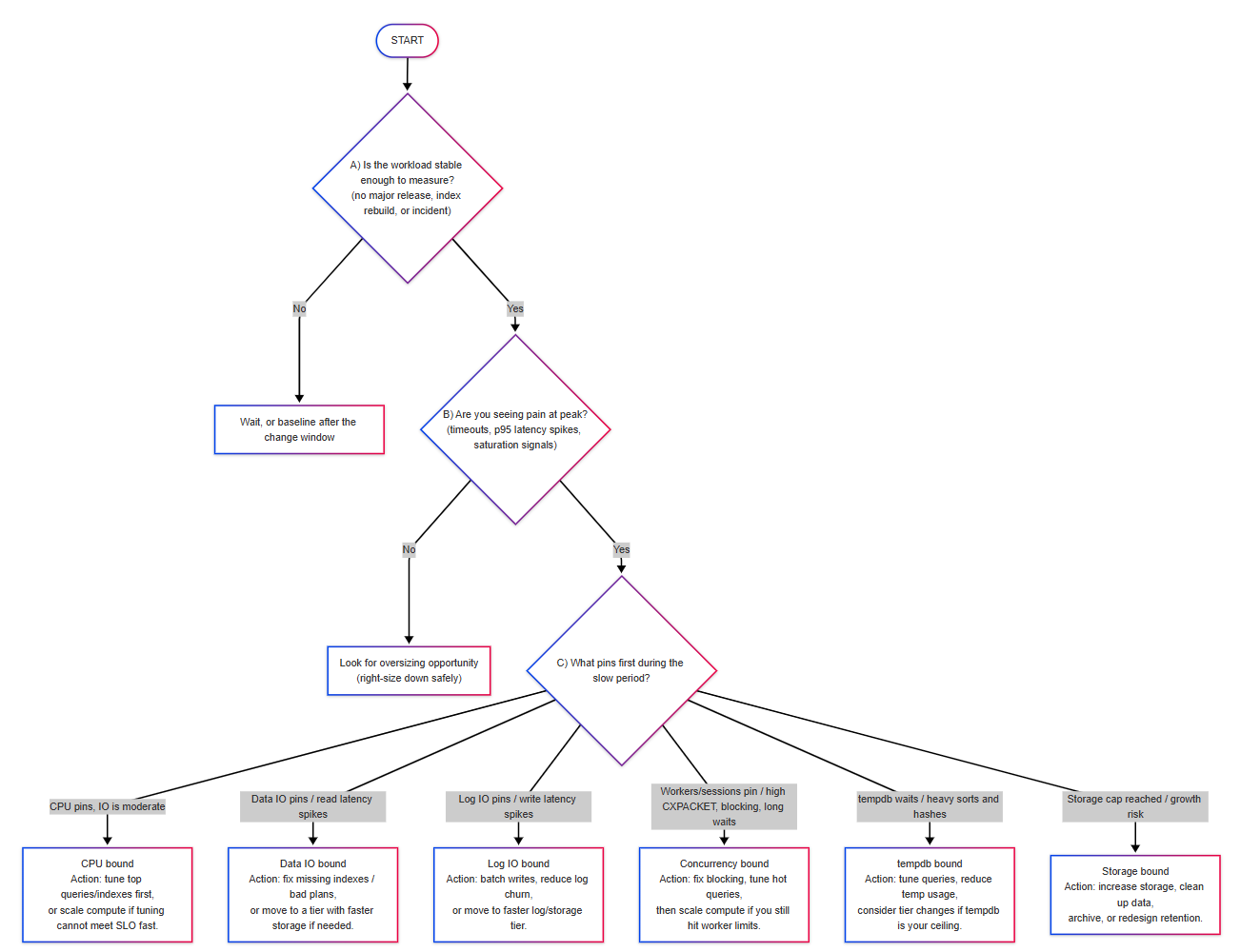

The decision tree

Use this in order. Do not jump to 'scale up' or 'move tiers' until you identify the limiting signal.

What to do for each limiter

1) CPU bound

Signals you typically see:

CPU stays high during peak, while Data IO and Log IO are not saturated

Top queries show high CPU time in Query Store

Waits suggest compute pressure more than IO pressure

First moves that usually work:

Find the top 3 queries by CPU in Query Store and fix the obvious: missing indexes, bad parameterization, scans on hot tables.

If the app has bursty peaks but a low average, consider serverless or scheduled scale up/down (if your workload tolerates it).

If tuning cannot meet the SLO in time, scale up one compute step, validate, then come back for tuning later.

2) Data IO bound

Signals you typically see:

Data IO % pins or read latency spikes during peak

CPU is not pinned, but requests are slow

Queries show high logical reads / physical reads

First moves that usually work:

Fix query plans and indexing before buying faster storage. A missing index can look like a tier problem.

Check for noisy scans caused by non-sargable predicates, implicit conversions, or stale stats.

If the workload truly needs higher read IOPS/latency, evaluate moving tiers (for example, to a tier with faster storage).

3) Log IO bound

Signals you typically see:

Log IO % pins during write-heavy periods

High write latency, long commit times, or transaction log pressure

Batch jobs that hammer a single hot table

First moves that usually work:

Batch writes (smaller transactions) and avoid long-running open transactions.

Reduce churn: avoid needless index maintenance during business hours, and watch for chatty app patterns.

If the write rate is real and sustained, evaluate a tier change where the log throughput is higher.

4) Concurrency bound

Signals you typically see:

Workers/sessions run near the ceiling; queueing and wait time rise

Blocking chains, lock waits, or high parallelism waits

Small queries get slow only when many users are active

First moves that usually work:

Kill blocking before you scale: identify the top blockers and fix the transaction pattern.

Tune the top wait categories (locks, latches, parallelism) and address the specific root cause.

If concurrency is simply higher than the compute can handle, scale compute after you remove obvious contention.

5) tempdb bound

Signals you typically see:

tempdb-related waits and memory grant pressure during sorts/hashes

Queries that spill to tempdb (Query Store shows spills or high temp usage patterns)

ETL/reporting workloads sharing the same database as OLTP

First moves that usually work:

Separate batch/reporting workloads from OLTP if possible (even by schedule).

Tune queries that spill, and reduce unnecessary temp tables.

If tempdb is consistently the ceiling, consider tier changes and/or more compute.

Right-size down without breaking things

Most cost wins come from scaling down. The trick is to do it like an operator: in small moves, with proof.

Downsize one step at a time (one compute size or one tier step).

Do it during a known low-traffic window, then observe during the next normal peak.

Compare before/after in Query Store: top query durations, regressions, and plan changes.

Keep the rollback plan simple: revert to the previous size and re-check the limiter signals.

If you are running an elastic pool, treat the pool as the unit of safety. Pool right-sizing works best when you also track per-database peak patterns so one noisy neighbor does not force an oversized pool.

Cost lens: do not confuse 'cheaper SKU' with 'cheaper outcome'

Right-sizing is about the total cost for a stable SLO. Sometimes that means scaling down. Sometimes it means scaling up a bit because it avoids a more expensive architecture problem.

If the workload is predictable and always on, reserved capacity can beat repeated micro-optimizations.

If the workload is spiky, scheduled scaling or serverless can reduce idle spend (but confirm your cold-start tolerance).

If you are in an elastic pool, savings often come from smoothing peaks and removing outliers, not just shrinking the pool.

Make it a loop: the 30-minute weekly right-sizing ritual

Pull last week's peak metrics and the top 10 queries from Query Store.

Mark the limiter for the slowest period (CPU, data IO, log IO, concurrency, tempdb, storage).

Pick one change: tune one query, add one index, or adjust one size.

Run the change with a defined rollback trigger.

Log the outcome (cost delta + performance delta) so you build trust and repeatability.

Closing thought

The fastest way to break SQL performance is to right-size by guesswork. The fastest way to reduce cost is to right-size by signals. If you run this decision tree consistently, your cost and your incidents both drift down.